One of the biggest trends in mixed reality this year is the arrival of chatbots on platforms like HoloLens. Speech commands are a common input for many XR devices. Adding conversational AI to extend these native speech recognition capabilities is a natural next step toward a future in which personalized virtual assistants backed by powerful AI accompany us in hologram form. They may be relegated to providing us with shopping suggestions, but perhaps, instead, they’ll become powerful custom tools that help make us sharper, give honest feedback, and assist in achieving our personal goals.

If you have followed the development of sci-fi artificial intelligence in television and movies over the years, the move from voice to full holograms will seem natural. In early sci-fi, such as HAL from the movie 2001: A Space Odyssey or the computer from the original Star Trek, computer intelligence was generally represented as a disembodied voice. In more recent incarnations of virtual assistants, such as those in Star Trek: Voyager and Blade Runner 2049, these voices are finally personified as full holograms: the Emergency Medical Hologram and Joi.

In a similar way, Cortana, Alexa, and Siri are slowly moving from our smartphones, Echos, and Invoke devices to our holographic headsets. These are still early days, but the technology is already in place and the future incarnation of our virtual assistants is relatively clear.

The rise of the chatbot

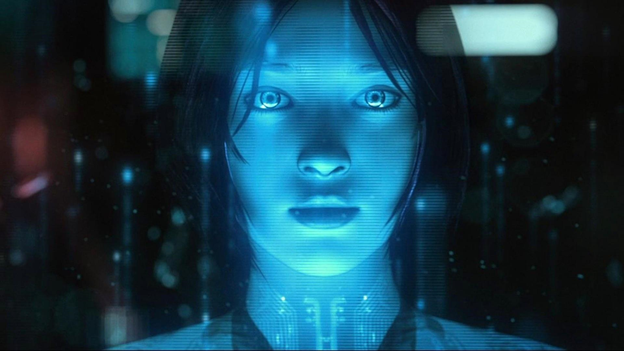

For Microsoft’s personal digital assistant Cortana, who started her life as a hologram in the Halo video games for Xbox, the move to holographic headsets is a bit of a homecoming. It seems natural, then, that when Microsoft HoloLens was first released in 2016, Cortana was already built into the onboard holographic operating system.

Then, in a 2017 article on the Windows Apps Team blog, “Building the Terminator Vision HUD in HoloLens,” Microsoft showed people how to integrate Azure Cognitive Services into their holographic head-mounted display in order to provide smart object recognition and even translation services as a Terminator-like HUD overlay.

The only thing left to do to get to a smart virtual assistant was to tie together the HoloLens’s built-in Cortana speech capabilities with some AI to create an interactive experience. Not surprisingly, Microsoft was able to fill this gap with the Bot Framework.

Virtual assistants and Microsoft Bot Framework

Microsoft Bot Framework combines AI backed by Azure Cognitive Services with natural-language capabilities. It includes a set of open source SDKs and tools that enable developers to build, test, and connect bots that interact naturally with users. With the Microsoft Bot Framework, it is easy to create a bot that can speak, listen, understand, and even learn from your users over time with Azure Cognitive Services. This chatbot technology is sometimes referred to as conversational AI.

There are several chatbot tools available. I am most familiar with the Bot Framework, so I will be talking about that. Right now, chatbots built with the Bot Framework can be adapted for speech interactions or for text interactions like the UPS virtual assistant example above. They are relatively easy to build and customize using prepared templates and web-based dialogs.

One of my favorite ways to build a chatbot is by using QnA Maker, which lets you simply point to an online FAQ page or upload product documentation to use as the knowledge base for your bot service. QnA Maker then walks you through applying a chatbot personality to your knowledge base and deploying it, usually with no custom coding. What I love about this is that you can get a sophisticated chatbot rolled out in about half a day.
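To make the knowledge-base idea concrete, here is a minimal sketch in plain Python of matching a user's question against a set of FAQ pairs. It does not call QnA Maker and is not how the service ranks answers internally; it only illustrates the general pattern of "find the closest known question, or fall back". The `FAQ` entries, the `answer` function, and the 0.6 threshold are all assumptions made up for this illustration.

```python
from difflib import SequenceMatcher
from typing import Dict

# Placeholder knowledge base (hypothetical); a real one would be built
# from your FAQ page or product documentation.
FAQ: Dict[str, str] = {
    "What are your opening hours?": "We are open 9am to 5pm, Monday to Friday.",
    "How do I return a product?": "Start a return from the Orders page.",
}

FALLBACK = "Sorry, I don't have an answer for that yet."


def similarity(a: str, b: str) -> float:
    """Case-insensitive string similarity between 0.0 and 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def answer(question: str, kb: Dict[str, str], threshold: float = 0.6) -> str:
    """Return the answer for the closest known question, or a fallback."""
    if not kb:
        return FALLBACK
    # Pick the stored question that looks most like the user's question.
    best = max(kb, key=lambda known: similarity(question, known))
    if similarity(question, best) >= threshold:
        return kb[best]
    return FALLBACK


if __name__ == "__main__":
    print(answer("what are your opening hours", FAQ))
```

A production service would likely use far richer language understanding than character-level similarity, which is exactly the part a hosted tool takes off your hands.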

Using the Microsoft Bot Framework, you also have the ability to take full control of the creation process to customize your bot in code. Bot apps can be created in C#, JavaScript, Python or Java. You can extend the capabilities of the Bot Framework with middleware that you either create yourself or bring into your code from third parties. There are even advanced capabilities available for managing complex conversation flows with branches and loops.
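If the idea of middleware is new to you, the following sketch shows the general pattern in plain Python. It deliberately does not use the Bot Framework SDK, which has its own middleware interface; the names here (`Turn`, `normalize`, `make_logger`, `echo_bot`, `build_pipeline`) are hypothetical and exist only for this illustration. Each middleware receives the current turn plus a function that invokes the rest of the chain, so it can act before the bot logic, after it, or both.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Turn:
    """One incoming message plus the replies produced for it (hypothetical type)."""
    text: str
    replies: List[str] = field(default_factory=list)


Handler = Callable[[Turn], None]
Middleware = Callable[[Turn, Handler], None]


def normalize(turn: Turn, next_handler: Handler) -> None:
    # Runs before the bot logic: tidy up the raw utterance.
    turn.text = turn.text.strip().lower()
    next_handler(turn)


def make_logger(log: List[str]) -> Middleware:
    def logger(turn: Turn, next_handler: Handler) -> None:
        next_handler(turn)
        # Runs after the bot logic: record what was said and answered.
        log.append(f"{turn.text!r} -> {turn.replies}")
    return logger


def echo_bot(turn: Turn) -> None:
    # Stand-in for the real bot logic.
    turn.replies.append(f"You said: {turn.text}")


def build_pipeline(middlewares: List[Middleware], bot: Handler) -> Handler:
    """Wrap the bot so the first middleware in the list runs outermost."""
    def wrap(mw: Middleware, nxt: Handler) -> Handler:
        def handler(turn: Turn) -> None:
            mw(turn, nxt)
        return handler

    handler = bot
    for mw in reversed(middlewares):
        handler = wrap(mw, handler)
    return handler


if __name__ == "__main__":
    log: List[str] = []
    pipeline = build_pipeline([normalize, make_logger(log)], echo_bot)
    turn = Turn("  Hello HoloLens  ")
    pipeline(turn)
    print(turn.replies)
    print(log)
```

The appeal of this design is that concerns such as logging, translation, or sentiment analysis stay out of the bot logic itself and can be added or removed by changing the list passed to `build_pipeline`.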

Ethical chatbots

Having introduced the idea above of building a Terminator HUD using Cognitive Services, I think it’s important to also raise awareness about fostering ethical AI and ethical thinking around AI. To borrow from the book The Future Computed, AI systems should be fair, reliable and safe, private and secure, inclusive, transparent, and accountable. As we build all forms of chatbots and virtual assistants, we should always consider what we intend our intelligent systems to do, as well as concern ourselves with what they might do unintentionally.

The ultimate convergence of AI and mixed reality

Today, chatbots are geared toward integrating skills for commerce like finding directions, locating restaurants, and providing help with a company’s products through virtual assistants. One of the chief research goals driving better chatbots is to personalize the chatbot experience. Achieving a high level of personalization will require extending current chatbots with more AI capabilities. Fortunately, this isn’t a far-future thing. As shown in the Terminator HUD tutorial above, adding Cognitive Services to your chatbots and devices is easy to do.

Because holographic headsets have many external sensors, AI will also be useful for analyzing all this visual and location data and turning it into useful information through the chatbot and Cognitive Services. For instance, cameras can be used to help translate street signs if you are in a foreign city or to identify products when you are shopping and provide helpful reviews.

Finally, AI will be needed to create realistic 3D model representations of your chatbot and overcome the uncanny valley that is currently holding back VR, AR, and MR. When all three elements are in place to augment your chatbot (personalization, computer vision, and humanized 3D modeling), we’ll be that much closer to what we’ve always hoped for: personalized AI that looks out for us as individuals.

Here is some additional reading on the convergence of chatbots and MR you will find helpful: